What are you supposed to do when you find a GPU in DMZ? Are there missions tied to it or what? : r/Warzone

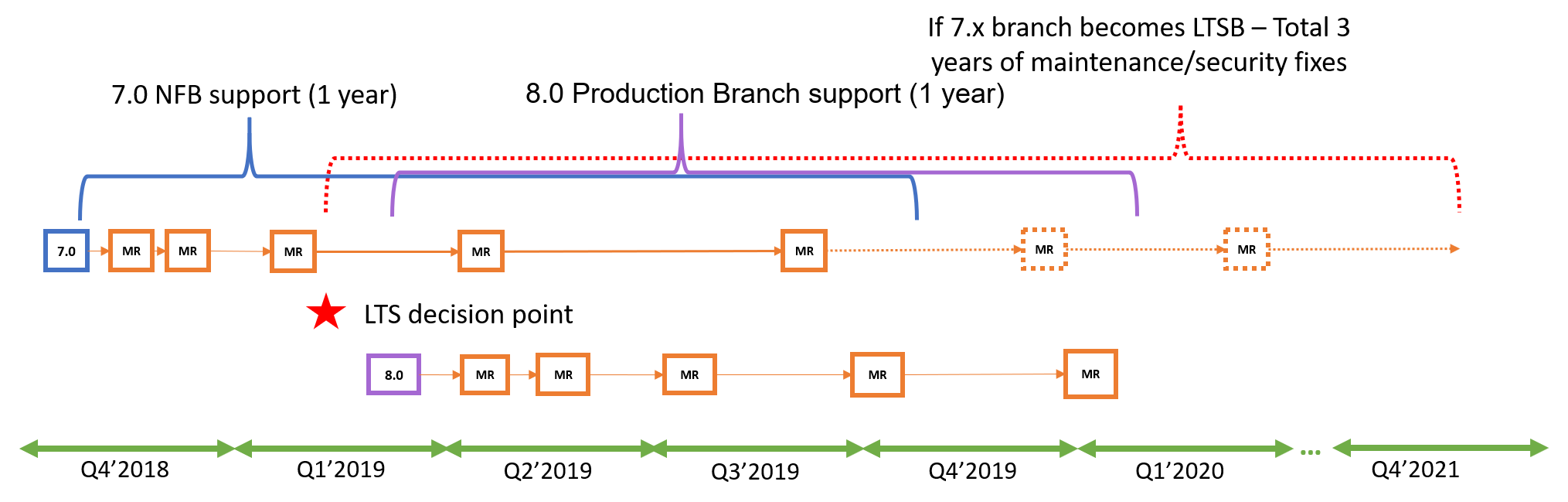

NVIDIA Virtual GPU Software Lifecycle Policy (January 21, 2021) :: NVIDIA Virtual GPU Software News and Updates

gpu operator does not reconcile cluster policy to update managed daemonsets · Issue #186 · NVIDIA/gpu-operator · GitHub

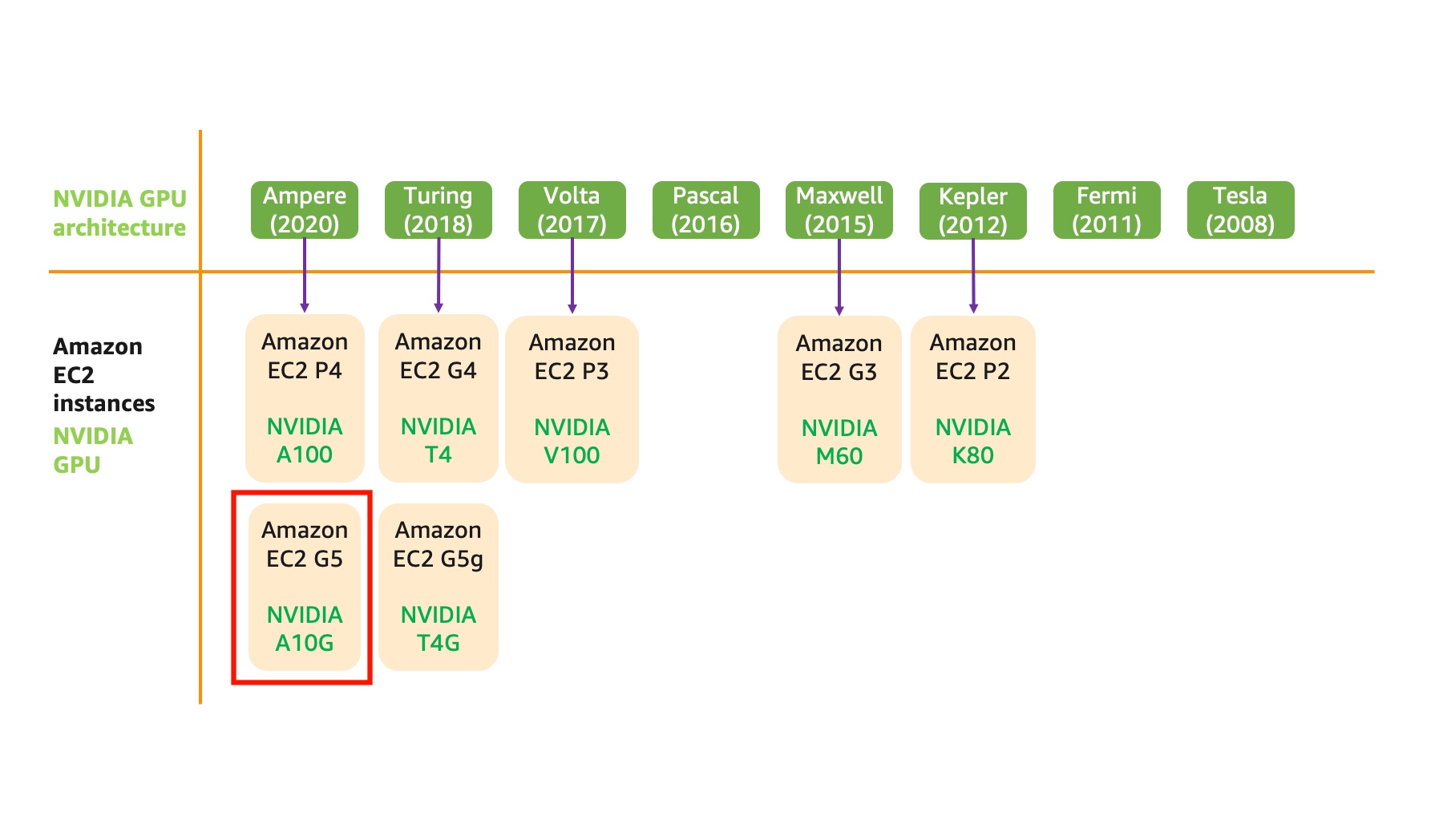

Shashank Prasanna on X: "You want the best perf/cost, single-GPU instance for training/inference: g5.xlarge. Based on latest Ampere architecture, NVIDIA A10G GPU has 24GB memory. This option is for the most of